Synthesizing Post-Disaster Street Views from Satellite Imagery via Generative Vision Models

Accepted at IEEE IGARSS 2026

Ground-level street-view imagery is critical for post-disaster damage assessment, yet is often unavailable immediately after events due to access constraints. This project benchmarks whether realistic and semantically consistent street views can be synthesized from satellite imagery, and evaluates how different generative paradigms perform under this cross-view setting.

| Method | Paradigm | Key Characteristic |
|---|---|---|
| Pix2Pix | Conditional GAN | Preserves spatial layout; prone to texture blur |
| SD 1.5 + ControlNet | Diffusion | High realism; may underestimate fine-grained damage |
| ControlNet + VLM (Gemini) | Diffusion + Semantic | Damage-aware; possible semantic–geometry tension |
| Disaster-MoE | Mixture of Experts | Severity-adaptive; decouples structure from texture |

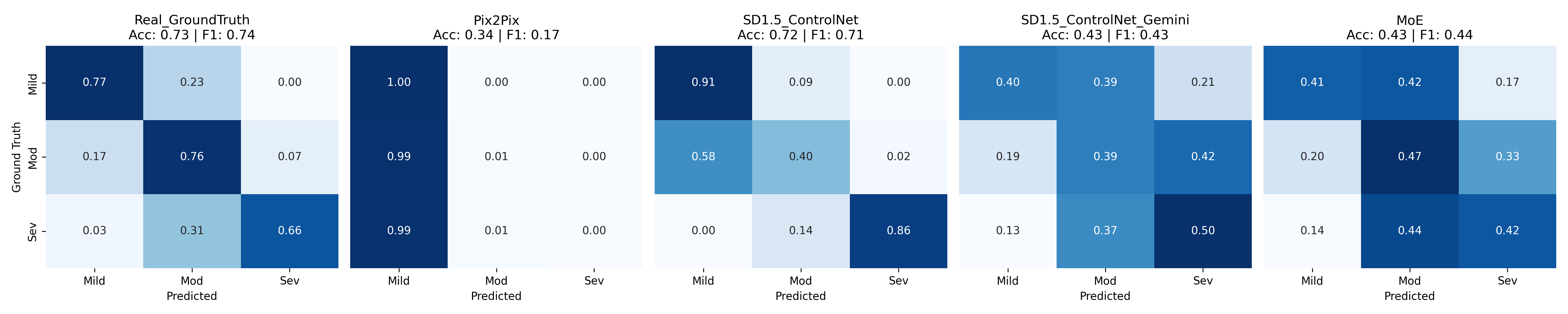

- SD 1.5 + ControlNet achieves the highest semantic consistency (F1 ≈ 0.71)

- Pix2Pix exhibits strong mode collapse toward mild damage predictions

- The Gemini-guided ControlNet and Disaster-MoE variants improve visual realism at a slight cost in class separability
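To make the two quantities behind these findings concrete, here is a minimal sketch of how an F1-style consistency score and a mode-collapse check could be computed. It assumes that each synthesized street view is assigned a damage class and compared against a reference label. The class names, the macro averaging, and the function names are illustrative assumptions, not the evaluation protocol used in the paper.

```python
from collections import Counter

# Hypothetical damage classes; the project page does not list its label set.
CLASSES = ["mild", "moderate", "severe"]


def per_class_f1(y_true, y_pred, classes=CLASSES):
    """Return {class: F1} computed from paired reference/predicted labels."""
    scores = {}
    for c in classes:
        tp = sum(1 for t, p in zip(y_true, y_pred) if t == c and p == c)
        fp = sum(1 for t, p in zip(y_true, y_pred) if t != c and p == c)
        fn = sum(1 for t, p in zip(y_true, y_pred) if t == c and p != c)
        denom = 2 * tp + fp + fn
        # F1 = 2TP / (2TP + FP + FN); defined as 0 when the class never occurs.
        scores[c] = 2 * tp / denom if denom else 0.0
    return scores


def macro_f1(y_true, y_pred, classes=CLASSES):
    """Unweighted mean of per-class F1 (assumed averaging scheme)."""
    scores = per_class_f1(y_true, y_pred, classes)
    return sum(scores.values()) / len(classes)


def prediction_share(y_pred, classes=CLASSES):
    """Fraction of predictions per class; a heavily skewed share
    toward one class is a simple symptom of mode collapse."""
    n = len(y_pred)
    if n == 0:
        return {c: 0.0 for c in classes}
    counts = Counter(y_pred)
    return {c: counts.get(c, 0) / n for c in classes}


if __name__ == "__main__":
    # Toy labels for illustration only, not data from the benchmark.
    y_true = ["mild", "moderate", "severe", "severe"]
    y_pred = ["mild", "mild", "mild", "severe"]
    print(macro_f1(y_true, y_pred))
    print(prediction_share(y_pred))
```

In the toy example, three of four predictions fall into the "mild" class. This is the kind of skew the Pix2Pix finding describes, and it lowers the macro F1 because the "moderate" class is never predicted.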

@article{yang2026satellite,
  title   = {Satellite-to-Street: Synthesizing Post-Disaster Views from Satellite Imagery via Generative Vision Models},
  author  = {Yang, Yifan and Zou, Lei and Jepson, Wendy},
  journal = {arXiv preprint arXiv:2603.20697},
  year    = {2026}
}

Supported by the Texas A&M University Environment and Sustainability Initiative (ESI) through the Environment and Sustainability Graduate Fellow Award.

Yifan Yang, Department of Geography, Texas A&M University · yyang295@tamu.edu · rayford295.github.io