diff --git a/mkdocs_rt.yml b/mkdocs_rt.yml

index d31a8e8..07df3b6 100644

--- a/mkdocs_rt.yml

+++ b/mkdocs_rt.yml

@@ -83,37 +83,45 @@ extra:

nav:

- Getting Started: ./getting_started.md

- - Motion Commands: ./jogging.md

- - Robot Drivers: ./robot_drivers.md

- # - Writing Nodes: ./writing_nodes.md # TODO

- - Navigation with Nav2:

+ - ROS2 Basics:

+ - Creating Packages & Nodes: ./writing_nodes.md

+ - Introduction to ROS2 Client (rclpy): ./intro_to_ros2.md

+ - Introduction to HelloNode: ./intro_to_hellonode.md

+ - Simulation Tutorial: ./stretch_simulation.md

+ - Teleoperation: ./teleoperating_stretch.md

+ - RViz Tutorial: ./rviz_basics.md

+ - Follow Joint Trajectory & Joint States: ./follow_joint_trajectory.md

+ - Motion Commands via hello_utils: ./jogging.md

+ - Robot Driver: ./robot_drivers.md

+ - Twist Control: ./twist_control.md

+ - Sensors Tutorial: ./sensors_tutorial.md

+ - Nav Stack Tutorial:

- Overview: ./navigation_overview.md

- Nav2 Basics: ./navigation_stack.md

- Nav2 Simple Commander: ./navigation_simple_commander.md

- # - Manipulation with MoveIt2: # TODO

- # - MoveIt Basics: ./moveit_basics.md # TODO

- # - MoveIt with RViz: ./moveit_rviz_demo.md # TODO

- # - MoveGroup C++ API: ./moveit_movegroup_demo.md # TODO

- - FUNMAP: https://github.com/hello-robot/stretch_ros2/tree/humble/stretch_funmap#overview

- # - Mapping: ./writing_nodes.md #TODO

- # - Navigation: ./writing_nodes.md #TODO

- # - Manipulation: ./writing_nodes.md # TODO

- - Perception: ./perception.md

+ - Perception Tutorial: ./perception.md

+ - Deep Perception: ./deep_perception.md

+ - ArUco Markers: ./aruco_marker_detection.md

+ - Offloading Computation Tutorial: ./remote_compute.md

+ - Autonomy Demos:

+ - Hello World: ./demo_hello_world.md

+ - Grasp Object: ./demo_grasp_object.md

+ - Handover Object: ./demo_handover_object.md

+ - Open Drawer: ./demo_open_drawer.md

+ - Surface Cleaning: ./demo_surface_cleaning.md

+ - FUNMAP Tutorial: ./funmap_tutorial.md

- Examples:

- - Move Stretch with Twist Controller: ./example_1.md

- - Trajectory Control: ./follow_joint_trajectory.md

+ - Voice Teleop: ./voice_teleop.md

+ - Speech to Text: ./speech_to_text.md

+ - Lidar Based Sensing: ./lidar_filtering.md

+ - Custom RViz Marker: ./rviz_markers.md

+ - Effort Value Plotting: ./joint_effort_plotting.md

+ - Tf2 Listener and Broadcaster: ./tf2_transforms.md

+ - Realsense Camera: ./realsense_camera.md

+ - Align to ArUco Tutorial: ./align_to_aruco.md

+ - ArUco Locator: ./aruco_marker_detection#aruco-locator-example

+ - Joint States: ./joint_states.md

+ - Collision Avoidance: ./collision_avoidance.md

- Obstacle Avoidance: ./obstacle_avoider.md

- - Collision Avoidance: ./example_3.md

- - Rviz Markers: ./example_4.md

- - Offloading Computation: ./remote_compute.md

- - Align to ArUco: ./ros2/align_to_aruco.md

- - ArUco Locator: ./example_12.md

- - Joints:

- - Joint States: ./example_5.md

- - Static Transformations: ./example_10.md

- - Joint Effort: ./example_6.md

- - Deep Perception: ./ros2/deep_perception.md

- - Filter Laser Scans: ./example_2.md

- - Realsense Camera: ./example_7.md

- - Microphones: ./example_8.md

- - Voice Teleop: ./example_9.md

+

+

diff --git a/ros2/README.md b/ros2/README.md

index c77faee..7f3a918 100644

--- a/ros2/README.md

+++ b/ros2/README.md

@@ -1,5 +1,16 @@

-# Tutorial Track: Stretch ROS 2 (Beta)

+# Tutorial Track: Stretch ROS 2

+

+This tutorial series covers writing ROS 2 software for Stretch. ROS 2 programs can be written in a variety of programming languages, but this series uses Python. We'll write programs that enable Stretch to navigate autonomously in its environment, manipulate objects with Stretch's gripper, perceive its environment, and much more.

+

+## Prerequisites

+

+Ensure that:

+

+ 1. Your Stretch has the latest robot distribution installed

+ - These tutorials were written for the latest robot distribution. Take a look at the [Distributions & Roadmap](../../software/distributions/) guide to identify your current distribution and upgrade if necessary.

+ 2. You have gone through the [Getting Started Tutorials](../../getting_started/hello_robot/)

+ - If you've never developed with Stretch before or are new to programming, check out the [Developing with Stretch](../../developing/basics/) tutorial series. In particular, the [Using ROS 2 with Stretch](#TODO) tutorial from that series is a good resource for those new to ROS 2.

## Robot Operating System 2 (ROS 2)

@@ -16,23 +27,25 @@ This tutorial track is for users looking to get familiar with programming Stretc

| | Tutorial | Description |

|--|---------------------------------------------------------------------------------|----------------------------------------------------|

-| 1 | [Getting Started](getting_started.md) | Setup instructions for ROS 2 on Stretch|

-| 2 | [Introduction to ROS 2](intro_to_ros2.md.md) | Explore the client library used in ROS2 |

-| 3 | [Introduction to HelloNode](intro_to_hellonode.md) | Explore the Hello Node class to create a ROS2 node for Stretch |

-| 4 | [Teleoperating Stretch](teleoperating_stretch.md) | Control Stretch with a Keyboard or a Gamepad controller. |

-| 5 | [Internal State of Stretch](internal_state_of_stretch.md) | Monitor the joint states of Stretch. |

-| 6 | [RViz Basics](rviz_basics.md) | Visualize topics in Stretch. |

-| 7 | [Nav2 Stack](navigation_overview.md) | Motion planning and control for mobile base. |

-| 8 | [Follow Joint Trajectory Commands](follow_joint_trajectory.md) | Control joints using joint trajectory server. |

-| 9 | [Perception](perception.md) | Use the Realsense D435i camera to visualize the environment. |

-| 10 | [ArUco Marker Detection](aruco_marker_detection.md) | Localize objects using ArUco markers. |

-| 11 | [ReSpeaker Microphone Array](respeaker_mic_array.md) | Learn to use the ReSpeaker Microphone Array. |

-| 12 | [FUNMAP](https://github.com/hello-robot/stretch_ros2/tree/humble/stretch_funmap) | Fast Unified Navigation, Manipulation and Planning. |

-

+| 1 | [Creating your own package, launch files, nodes](writing_nodes.md) | Setup instructions for ROS 2 on Stretch|

+| 2 | [Simulation Tutorial](stretch_simulation.md) | Explore the client library used in ROS2 |

+| 3 | [Teleoperating Stretch](teleoperating_stretch.md) | Control Stretch with a Keyboard or a Gamepad controller. |

+| 4 | [RViz Basics](rviz_basics.md) | Visualize topics in Stretch. |

+| 5 | [Follow Joint Trajectory Commands](follow_joint_trajectory.md) | Control joints using joint trajectory server. |

+| 6 | [Introduction to HelloNode](intro_to_hellonode.md) | Explore the Hello Node class to create a ROS2 node for Stretch |

+| 7 | [Robot Driver](robot_drivers.md) | ROS2 Wrapper for the python API. |

+| 8 | [Twist Control](twist_control.md) | Using Twist messages to control the mobile base. |

+| 9 | [Sensors](sensors_tutorial.md) | Stretch sensors including the ReSpeaker microphone array, IMU, bump sensors, and cliff sensors. |

+| 10 | [Nav2 Stack](navigation_overview.md) | Motion planning and control for mobile base. |

+| 11 | [Perception](perception.md) | Use the Realsense D435i camera to visualize the environment. |

+| 12 | [Deep Perception](deep_perception.md) | Perception using Deep Learning. |

+| 13 | [ArUco Marker Detection](aruco_marker_detection.md) | Localize objects using ArUco markers. |

+| 14 | [Offloading Computation Tutorial](remote_compute.md) | Offloading computationally intensive processes. |

+| 15 | [Avoiding Race Conditions and Deadlocks](avoiding_deadlocks_race_conditions.md) | Learn how to avoid Race Conditions and Deadlocks |

+| 16 | [Autonomy Demos](demo_hello_world.md) | A few demos showcasing Stretch's autonomous capabilities. |

+| 17 | [FUNMAP](https://github.com/hello-robot/stretch_ros2/tree/humble/stretch_funmap) | Fast Unified Navigation, Manipulation and Planning. |

+

+

## Other Examples

To help get you started on your software development, here are examples of nodes to have Stretch perform simple tasks.

@@ -40,20 +53,16 @@ To help get you started on your software development, here are examples of nodes

| | Tutorial | Description |

|---|-------------------------------------------------|----------------------------------------------------|

-| 1 | [Mobile Base Velocity Control](example_1.md) | Use a python script that sends velocity commands. |

-| 2 | [Filter Laser Scans](example_2.md) | Publish new scan ranges that are directly in front of Stretch.|

-| 3 | [Mobile Base Collision Avoidance](example_3.md) | Stop Stretch from running into a wall.|

-| 4 | [Give Stretch a Balloon](example_4.md) | Create a "balloon" marker that goes where ever Stretch goes.|

-| 5 | [Print Joint States](example_5.md) | Print the joint states of Stretch.|

-| 6 | [Store Effort Values](example_6.md) | Print, store, and plot the effort values of the Stretch robot.|

-| 7 | [Capture Image](example_7.md) | Capture images from the RealSense camera data.|

-| 8 | [Voice to Text](example_8.md) | Interpret speech and save transcript to a text file.|

-| 9 | [Voice Teleoperation of Base](example_9.md) | Use speech to teleoperate the mobile base.|

-| 10 | [Tf2 Broadcaster and Listener](example_10.md) | Create a tf2 broadcaster and listener.|

-| 11 | [ArUco Tag Locator](example_12.md) | Actuate the head to locate a requested ArUco marker tag and return a transform.|

+| 1 | [Voice to Text](speech_to_text.md) | Interpret speech and save transcript to a text file.|

+| 2 | [Voice Teleoperation of Base](voice_teleop.md) | Use speech to teleoperate the mobile base.|

+| 3 | [Filter Laser Scans](lidar_filtering.md) | Publish new scan ranges that are directly in front of Stretch.|

+| 4 | [Give Stretch a Balloon](rviz_markers.md) | Create a "balloon" marker that goes where ever Stretch goes.|

+| 5 | [Align to ArUco](align_to_aruco.md) | Detect ArUco fiducials using OpenCV and align to them.|

+| 6 | [ArUco Tag Locator](aruco_locator.md) | Actuate the head to locate a requested ArUco marker tag and return a transform.|

+| 7 | [Print Joint States](joint_states.md) | Print the joint states of Stretch.|

+| 8 | [Store Effort Values](joint_effort_plotting.md) | Print, store, and plot the effort values of the Stretch robot.|

+| 9 | [Tf2 Broadcaster and Listener](tf2_transforms.md) | Create a tf2 broadcaster and listener.|

+| 10 | [Capture Image](realsense_camera.md) | Capture images from the RealSense camera data.|

+| 11 | [Mobile Base Collision Avoidance](collision_avoidance.md) | Stop Stretch from running into a wall.|

| 12 | [Obstacle Avoider](obstacle_avoider.md) | Avoid obstacles using the planar lidar. |

-| 13 | [Align to ArUco](align_to_aruco.md) | Detect ArUco fiducials using OpenCV and align to them.|

-| 14 | [Deep Perception](deep_perception.md) | Use YOLOv5 to detect 3D objects in a point cloud.|

-| 15 | [Avoiding Race Conditions and Deadlocks](avoiding_deadlocks_race_conditions.md) | Learn how to avoid Race Conditions and Deadlocks |

-

diff --git a/ros2/align_to_aruco.md b/ros2/align_to_aruco.md

index 54cf2c4..69ec66a 100644

--- a/ros2/align_to_aruco.md

+++ b/ros2/align_to_aruco.md

@@ -102,7 +102,7 @@ Stretch comes preconfigured to identify ArUco markers. The ROS node that enables

## Computing Transformations

-If you have not already done so, now might be a good time to review the [tf listener](https://docs.hello-robot.com/latest/ros2/example_10/) tutorial. Go on, we can wait...

+If you have not already done so, now might be a good time to review the [tf listener](https://docs.hello-robot.com/latest/ros2/tf2_transforms/) tutorial.

Now that we know how to program stretch to return the transform between known reference frames, we can use this knowledge to compute the transform between the detected marker and the robot's base_link. From its current pose, for Stretch to align itself in front of the marker, we need to command it to reach there. But even before that, we need to program Stretch to know the goal pose. We define the goal pose to be 0.75 meter outward from the marker in the marker negative y-axis (Green axis). This is easier to visualize through the figure below.

diff --git a/ros2/aruco_marker_detection.md b/ros2/aruco_marker_detection.md

index fa28eeb..42e3e77 100644

--- a/ros2/aruco_marker_detection.md

+++ b/ros2/aruco_marker_detection.md

@@ -117,4 +117,464 @@ We select marker ID numbers using the following ranges.

When coming up with this guide, we expected the following:

* Body-mounted accessories with the same ID numbers mounted to different robots could be disambiguated using the expected range of 3D locations of the ArUco markers on the calibrated body.

-* Accessories in the environment with the same ID numbers could be disambiguated using a map or nearby observable features of the environment.

\ No newline at end of file

+* Accessories in the environment with the same ID numbers could be disambiguated using a map or nearby observable features of the environment.

+

+

+

+## ArUco Locator Example

+

+### Modifying Stretch Marker Dictionary YAML File

+When defining the ArUco markers on Stretch, hello robot utilizes a YAML file, [stretch_marker_dict.yaml](https://github.com/hello-robot/stretch_ros2/blob/humble/stretch_core/config/stretch_marker_dict.yaml), that holds the information about the markers. A further breakdown of the YAML file can be found in our [Aruco Marker Detection](aruco_marker_detection.md) tutorial.

+

+Below is what needs to be included in the [stretch_marker_dict.yaml](https://github.com/hello-robot/stretch_ros2/blob/humble/stretch_core/config/stretch_marker_dict.yaml) file so the [detect_aruco_markers](https://github.com/hello-robot/stretch_ros2/blob/humble/stretch_core/stretch_core/detect_aruco_markers.py) node can find the docking station's ArUco tag.

+

+```yaml

+'245':

+ 'length_mm': 88.0

+ 'use_rgb_only': False

+ 'name': 'docking_station'

+ 'link': None

+```

+

+### Running the example

+Begin by running the stretch driver launch file.

+

+```{.bash .shell-prompt}

+ros2 launch stretch_core stretch_driver.launch.py

+```

+

+To activate the RealSense camera and publish topics to be visualized, run the following launch file in a new terminal.

+

+```{.bash .shell-prompt}

+ros2 launch stretch_core d435i_high_resolution.launch.py

+```

+

+Next, run the stretch ArUco launch file which will bring up the [detect_aruco_markers](https://github.com/hello-robot/stretch_ros2/blob/humble/stretch_core/stretch_core/detect_aruco_markers.py) node. In a new terminal, execute:

+

+```{.bash .shell-prompt}

+ros2 launch stretch_core stretch_aruco.launch.py

+```

+

+Within this tutorial package, there is an [RViz config file](https://github.com/hello-robot/stretch_tutorials/blob/humble/rviz/aruco_detector_example.rviz) with the topics for the transform frames in the Display tree. You can visualize these topics and the robot model by running the command below in a new terminal.

+

+```{.bash .shell-prompt}

+ros2 run rviz2 rviz2 -d /home/hello-robot/ament_ws/src/stretch_tutorials/rviz/aruco_detector_example.rviz

+```

+

+Then run the [aruco_tag_locator.py](https://github.com/hello-robot/stretch_tutorials/blob/humble/stretch_ros_tutorials/aruco_tag_locator.py) node. In a new terminal, execute:

+

+```{.bash .shell-prompt}

+cd ament_ws/src/stretch_tutorials/stretch_ros_tutorials/

+python3 aruco_tag_locator.py

+```

+

+

+  +

+

+

+### The Code

+

+```python

+#!/usr/bin/env python3

+

+# Import modules

+import rclpy

+import time

+import tf2_ros

+from tf2_ros import TransformException

+from rclpy.time import Time

+from math import pi

+

+# Import hello_misc script for handling trajectory goals with an action client

+import hello_helpers.hello_misc as hm

+

+# We're going to subscribe to a JointState message type, so we need to import

+# the definition for it

+from sensor_msgs.msg import JointState

+

+# Import the FollowJointTrajectory from the control_msgs.action package to

+# control the Stretch robot

+from control_msgs.action import FollowJointTrajectory

+

+# Import JointTrajectoryPoint from the trajectory_msgs package to define

+# robot trajectories

+from trajectory_msgs.msg import JointTrajectoryPoint

+

+# Import TransformStamped from the geometry_msgs package for the publisher

+from geometry_msgs.msg import TransformStamped

+

+class LocateArUcoTag(hm.HelloNode):

+ """

+ A class that actuates the RealSense camera to find the docking station's

+ ArUco tag and returns a Transform between the `base_link` and the requested tag.

+ """

+ def __init__(self):

+ """

+ A function that initializes the subscriber and other needed variables.

+ :param self: The self reference.

+ """

+ # Initialize the inhereted hm.Hellonode class

+ hm.HelloNode.__init__(self)

+ hm.HelloNode.main(self, 'aruco_tag_locator', 'aruco_tag_locator', wait_for_first_pointcloud=False)

+ # Initialize subscriber

+ self.joint_states_sub = self.create_subscription(JointState, '/stretch/joint_states', self.joint_states_callback, 1)

+ # Initialize publisher

+ self.transform_pub = self.create_publisher(TransformStamped, 'ArUco_transform', 10)

+

+ # Initialize the variable that will store the joint state positions

+ self.joint_state = None

+

+ # Provide the min and max joint positions for the head pan. These values

+ # are needed for sweeping the head to search for the ArUco tag

+ self.min_pan_position = -3.8

+ self.max_pan_position = 1.50

+

+ # Define the number of steps for the sweep, then create the step size for

+ # the head pan joint

+ self.pan_num_steps = 10

+ self.pan_step_size = abs(self.min_pan_position - self.max_pan_position)/self.pan_num_steps

+

+ # Define the min tilt position, number of steps, and step size

+ self.min_tilt_position = -0.75

+ self.tilt_num_steps = 3

+ self.tilt_step_size = pi/16

+

+ # Define the head actuation rotational velocity

+ self.rot_vel = 0.5 # radians per sec

+

+ def joint_states_callback(self, msg):

+ """

+ A callback function that stores Stretch's joint states.

+ :param self: The self reference.

+ :param msg: The JointState message type.

+ """

+ self.joint_state = msg

+

+ def send_command(self, command):

+ '''

+ Handles single joint control commands by constructing a FollowJointTrajectoryGoal

+ message and sending it to the trajectory_client created in hello_misc.

+ :param self: The self reference.

+ :param command: A dictionary message type.

+ '''

+ if (self.joint_state is not None) and (command is not None):

+

+ # Extract the string value from the `joint` key

+ joint_name = command['joint']

+

+ # Set trajectory_goal as a FollowJointTrajectory.Goal and define

+ # the joint name

+ trajectory_goal = FollowJointTrajectory.Goal()

+ trajectory_goal.trajectory.joint_names = [joint_name]

+

+ # Create a JointTrajectoryPoint message type

+ point = JointTrajectoryPoint()

+

+ # Check to see if `delta` is a key in the command dictionary

+ if 'delta' in command:

+ # Get the current position of the joint and add the delta as a

+ # new position value

+ joint_index = self.joint_state.name.index(joint_name)

+ joint_value = self.joint_state.position[joint_index]

+ delta = command['delta']

+ new_value = joint_value + delta

+ point.positions = [new_value]

+

+ # Check to see if `position` is a key in the command dictionary

+ elif 'position' in command:

+ # extract the head position value from the `position` key

+ point.positions = [command['position']]

+

+ # Set the rotational velocity

+ point.velocities = [self.rot_vel]

+

+ # Assign goal position with updated point variable

+ trajectory_goal.trajectory.points = [point]

+

+ # Specify the coordinate frame that we want (base_link) and set the time to be now.

+ trajectory_goal.trajectory.header.stamp = self.get_clock().now().to_msg()

+ trajectory_goal.trajectory.header.frame_id = 'base_link'

+

+ # Make the action call and send the goal. The last line of code waits

+ # for the result

+ self.trajectory_client.send_goal(trajectory_goal)

+

+ def find_tag(self, tag_name='docking_station'):

+ """

+ A function that actuates the camera to search for a defined ArUco tag

+ marker. Then the function returns the pose.

+ :param self: The self reference.

+ :param tag_name: A string value of the ArUco marker name.

+

+ :returns transform: The docking station's TransformStamped message.

+ """

+ # Create dictionaries to get the head in its initial position

+ pan_command = {'joint': 'joint_head_pan', 'position': self.min_pan_position}

+ self.send_command(pan_command)

+ tilt_command = {'joint': 'joint_head_tilt', 'position': self.min_tilt_position}

+ self.send_command(tilt_command)

+

+ # Nested for loop to sweep the joint_head_pan and joint_head_tilt in increments

+ for i in range(self.tilt_num_steps):

+ for j in range(self.pan_num_steps):

+ # Update the joint_head_pan position by the pan_step_size

+ pan_command = {'joint': 'joint_head_pan', 'delta': self.pan_step_size}

+ self.send_command(pan_command)

+

+ # Give time for system to do a Transform lookup before next step

+ time.sleep(0.2)

+

+ # Use a try-except block

+ try:

+ now = Time()

+ # Look up transform between the base_link and requested ArUco tag

+ transform = self.tf_buffer.lookup_transform('base_link',

+ tag_name,

+ now)

+ self.get_logger().info(f"Found Requested Tag: \n{transform}")

+

+ # Publish the transform

+ self.transform_pub.publish(transform)

+

+ # Return the transform

+ return transform

+ except TransformException as ex:

+ continue

+

+ # Begin sweep with new tilt angle

+ pan_command = {'joint': 'joint_head_pan', 'position': self.min_pan_position}

+ self.send_command(pan_command)

+ tilt_command = {'joint': 'joint_head_tilt', 'delta': self.tilt_step_size}

+ self.send_command(tilt_command)

+ time.sleep(0.25)

+

+ # Notify that the requested tag was not found

+ self.get_logger().info("The requested tag '%s' was not found", tag_name)

+

+ def main(self):

+ """

+ Function that initiates the issue_command function.

+ :param self: The self reference.

+ """

+ # Create a StaticTranformBoradcaster Node. Also, start a Tf buffer that

+ # will store the tf information for a few seconds.Then set up a tf listener, which

+ # will subscribe to all of the relevant tf topics, and keep track of the information

+ self.static_broadcaster = tf2_ros.StaticTransformBroadcaster(self)

+ self.tf_buffer = tf2_ros.Buffer()

+ self.listener = tf2_ros.TransformListener(self.tf_buffer, self)

+

+ # Give the listener some time to accumulate transforms

+ time.sleep(1.0)

+

+ # Notify Stretch is searching for the ArUco tag with `get_logger().info()`

+ self.get_logger().info('Searching for docking ArUco tag')

+

+ # Search for the ArUco marker for the docking station

+ pose = self.find_tag("docking_station")

+

+def main():

+ try:

+ # Instantiate the `LocateArUcoTag()` object

+ node = LocateArUcoTag()

+ # Run the `main()` method

+ node.main()

+ node.new_thread.join()

+ except:

+ node.get_logger().info('Interrupt received, so shutting down')

+ node.destroy_node()

+ rclpy.shutdown()

+

+

+if __name__ == '__main__':

+ main()

+```

+

+#### The Code Explained

+Now let's break the code down.

+

+```python

+#!/usr/bin/env python3

+```

+

+Every Python ROS [Node](http://docs.ros.org/en/humble/Tutorials/Beginner-CLI-Tools/Understanding-ROS2-Nodes/Understanding-ROS2-Nodes.html) will have this declaration at the top. The first line makes sure your script is executed as a Python3 script.

+

+```python

+import rclpy

+import time

+import tf2_ros

+from tf2_ros import TransformException

+from rclpy.time import Time

+from math import pi

+

+import hello_helpers.hello_misc as hm

+from sensor_msgs.msg import JointState

+from control_msgs.action import FollowJointTrajectory

+from trajectory_msgs.msg import JointTrajectoryPoint

+from geometry_msgs.msg import TransformStamped

+```

+

+You need to import `rclpy` if you are writing a ROS [Node](http://docs.ros.org/en/humble/Tutorials/Beginner-CLI-Tools/Understanding-ROS2-Nodes/Understanding-ROS2-Nodes.html). Import other python modules needed for this node. Import the `FollowJointTrajectory` from the [control_msgs.action](http://wiki.ros.org/control_msgs) package to control the Stretch robot. Import `JointTrajectoryPoint` from the [trajectory_msgs](https://github.com/ros2/common_interfaces/tree/humble/trajectory_msgs) package to define robot trajectories. The [hello_helpers](https://github.com/hello-robot/stretch_ros2/tree/humble/hello_helpers) package consists of a module that provides various Python scripts used across [stretch_ros](https://github.com/hello-robot/stretch_ros2). In this instance, we are importing the `hello_misc` script.

+

+```python

+def __init__(self):

+ # Initialize the inhereted hm.Hellonode class

+ hm.HelloNode.__init__(self)

+ hm.HelloNode.main(self, 'aruco_tag_locator', 'aruco_tag_locator', wait_for_first_pointcloud=False)

+ # Initialize subscriber

+ self.joint_states_sub = self.create_subscription(JointState, '/stretch/joint_states', self.joint_states_callback, 1)

+ # Initialize publisher

+ self.transform_pub = self.create_publisher(TransformStamped, 'ArUco_transform', 10)

+

+ # Initialize the variable that will store the joint state positions

+ self.joint_state = None

+```

+

+The `LocateArUcoTag` class inherits the `HelloNode` class from `hm` and is instantiated.

+

+Set up a subscriber with `self.create_subscription(JointState, '/stretch/joint_states', self.joint_states_callback, 1)`. We're going to subscribe to the topic `stretch/joint_states`, looking for `JointState` messages. When a message comes in, ROS is going to pass it to the function `joint_states_callback()` automatically.

+

+`self.create_publisher(TransformStamped, 'ArUco_transform', 10)` declares that your node is publishing to the `ArUco_transform` topic using the message type `TransformStamped`. The `10` argument limits the amount of queued messages if any subscriber is not receiving them fast enough.

+

+```python

+self.min_pan_position = -4.10

+self.max_pan_position = 1.50

+self.pan_num_steps = 10

+self.pan_step_size = abs(self.min_pan_position - self.max_pan_position)/self.pan_num_steps

+```

+

+Provide the minimum and maximum joint positions for the head pan. These values are needed for sweeping the head to search for the ArUco tag. We also define the number of steps for the sweep, then create the step size for the head pan joint.

+

+```python

+self.min_tilt_position = -0.75

+self.tilt_num_steps = 3

+self.tilt_step_size = pi/16

+```

+

+Set the minimum position of the tilt joint, the number of steps, and the size of each step.

+

+```python

+self.rot_vel = 0.5 # radians per sec

+```

+

+Define the head actuation rotational velocity.

+

+```python

+def joint_states_callback(self, msg):

+ self.joint_state = msg

+```

+

+The `joint_states_callback()` function stores Stretch's joint states.

+

+```python

+def send_command(self, command):

+ if (self.joint_state is not None) and (command is not None):

+ joint_name = command['joint']

+ trajectory_goal = FollowJointTrajectory.Goal()

+ trajectory_goal.trajectory.joint_names = [joint_name]

+ point = JointTrajectoryPoint()

+```

+

+Assign `trajectory_goal` as a `FollowJointTrajectory.Goal` message type. Then extract the string value from the `joint` key. Also, assign `point` as a `JointTrajectoryPoint` message type.

+

+```python

+if 'delta' in command:

+ joint_index = self.joint_state.name.index(joint_name)

+ joint_value = self.joint_state.position[joint_index]

+ delta = command['delta']

+ new_value = joint_value + delta

+ point.positions = [new_value]

+```

+

+Check to see if `delta` is a key in the command dictionary. Then get the current position of the joint and add the delta as a new position value.

+

+```python

+elif 'position' in command:

+ point.positions = [command['position']]

+```

+

+Check to see if `position` is a key in the command dictionary. Then extract the position value.

+

+```python

+point.velocities = [self.rot_vel]

+trajectory_goal.trajectory.points = [point]

+trajectory_goal.trajectory.header.stamp = self.get_clock().now().to_msg()

+trajectory_goal.trajectory.header.frame_id = 'base_link'

+self.trajectory_client.send_goal(trajectory_goal)

+```

+

+Then `trajectory_goal.trajectory.points` is defined by the positions set in `point`. Specify the coordinate frame that we want (*base_link*) and set the time to be now. Make the action call and send the goal.

+

+```python

+def find_tag(self, tag_name='docking_station'):

+ pan_command = {'joint': 'joint_head_pan', 'position': self.min_pan_position}

+ self.send_command(pan_command)

+ tilt_command = {'joint': 'joint_head_tilt', 'position': self.min_tilt_position}

+ self.send_command(tilt_command)

+```

+

+Create a dictionary to get the head in its initial position for its search and send the commands with the `send_command()` function.

+

+```python

+for i in range(self.tilt_num_steps):

+ for j in range(self.pan_num_steps):

+ pan_command = {'joint': 'joint_head_pan', 'delta': self.pan_step_size}

+ self.send_command(pan_command)

+ time.sleep(0.5)

+```

+

+Utilize a nested for loop to sweep the pan and tilt in increments. Then update the `joint_head_pan` position by the `pan_step_size`. Use `time.sleep()` function to give time to the system to do a Transform lookup before the next step.

+

+```python

+try:

+ now = Time()

+ transform = self.tf_buffer.lookup_transform('base_link',

+ tag_name,

+ now)

+ self.get_logger().info(f"Found Requested Tag: \n{transform}")

+ self.transform_pub.publish(transform)

+ return transform

+except TransformException as ex:

+ continue

+```

+

+Use a try-except block to look up the transform between the *base_link* and the requested ArUco tag. Then publish and return the `TransformStamped` message.

+

+```python

+pan_command = {'joint': 'joint_head_pan', 'position': self.min_pan_position}

+self.send_command(pan_command)

+tilt_command = {'joint': 'joint_head_tilt', 'delta': self.tilt_step_size}

+self.send_command(tilt_command)

+time.sleep(.25)

+```

+

+Begin sweep with new tilt angle.

+

+```python

+def main(self):

+ self.static_broadcaster = tf2_ros.StaticTransformBroadcaster(self)

+ self.tf_buffer = tf2_ros.Buffer()

+ self.listener = tf2_ros.TransformListener(self.tf_buffer, self)

+ time.sleep(1.0)

+```

+

+Create a StaticTranformBoradcaster Node. Also, start a tf buffer that will store the tf information for a few seconds. Then set up a tf listener, which will subscribe to all of the relevant tf topics, and keep track of the information. Include `time.sleep(1.0)` to give the listener some time to accumulate transforms.

+

+```python

+self.get_logger().info('Searching for docking ArUco tag')

+pose = self.find_tag("docking_station")

+```

+

+Notice Stretch is searching for the ArUco tag with a `self.get_logger().info()` function. Then search for the ArUco marker for the docking station.

+

+```python

+def main():

+ try:

+ node = LocateArUcoTag()

+ node.main()

+ node.new_thread.join()

+ except:

+ node.get_logger().info('Interrupt received, so shutting down')

+ node.destroy_node()

+ rclpy.shutdown()

+```

+Instantiate the `LocateArUcoTag()` object and run the `main()` method.

diff --git a/ros2/example_3.md b/ros2/collision_avoidance.md

similarity index 100%

rename from ros2/example_3.md

rename to ros2/collision_avoidance.md

diff --git a/ros2/example_12.md b/ros2/example_12.md

deleted file mode 100644

index 55223cf..0000000

--- a/ros2/example_12.md

+++ /dev/null

@@ -1,458 +0,0 @@

-# ArUco Locator

-For this example, we will send follow joint trajectory commands for the head camera to search and locate an ArUco tag. In this instance, a Stretch robot will try to locate the docking station's ArUco tag.

-

-## Modifying Stretch Marker Dictionary YAML File

-When defining the ArUco markers on Stretch, hello robot utilizes a YAML file, [stretch_marker_dict.yaml](https://github.com/hello-robot/stretch_ros2/blob/humble/stretch_core/config/stretch_marker_dict.yaml), that holds the information about the markers. A further breakdown of the YAML file can be found in our [Aruco Marker Detection](aruco_marker_detection.md) tutorial.

-

-Below is what needs to be included in the [stretch_marker_dict.yaml](https://github.com/hello-robot/stretch_ros2/blob/humble/stretch_core/config/stretch_marker_dict.yaml) file so the [detect_aruco_markers](https://github.com/hello-robot/stretch_ros2/blob/humble/stretch_core/stretch_core/detect_aruco_markers.py) node can find the docking station's ArUco tag.

-

-```yaml

-'245':

- 'length_mm': 88.0

- 'use_rgb_only': False

- 'name': 'docking_station'

- 'link': None

-```

-

-## Getting Started

-Begin by running the stretch driver launch file.

-

-```{.bash .shell-prompt}

-ros2 launch stretch_core stretch_driver.launch.py

-```

-

-To activate the RealSense camera and publish topics to be visualized, run the following launch file in a new terminal.

-

-```{.bash .shell-prompt}

-ros2 launch stretch_core d435i_high_resolution.launch.py

-```

-

-Next, run the stretch ArUco launch file which will bring up the [detect_aruco_markers](https://github.com/hello-robot/stretch_ros2/blob/humble/stretch_core/stretch_core/detect_aruco_markers.py) node. In a new terminal, execute:

-

-```{.bash .shell-prompt}

-ros2 launch stretch_core stretch_aruco.launch.py

-```

-

-Within this tutorial package, there is an [RViz config file](https://github.com/hello-robot/stretch_tutorials/blob/humble/rviz/aruco_detector_example.rviz) with the topics for the transform frames in the Display tree. You can visualize these topics and the robot model by running the command below in a new terminal.

-

-```{.bash .shell-prompt}

-ros2 run rviz2 rviz2 -d /home/hello-robot/ament_ws/src/stretch_tutorials/rviz/aruco_detector_example.rviz

-```

-

-Then run the [aruco_tag_locator.py](https://github.com/hello-robot/stretch_tutorials/blob/humble/stretch_ros_tutorials/aruco_tag_locator.py) node. In a new terminal, execute:

-

-```{.bash .shell-prompt}

-cd ament_ws/src/stretch_tutorials/stretch_ros_tutorials/

-python3 aruco_tag_locator.py

-```

-

-

-  -

-

-

-### The Code

-

-```python

-#!/usr/bin/env python3

-

-# Import modules

-import rclpy

-import time

-import tf2_ros

-from tf2_ros import TransformException

-from rclpy.time import Time

-from math import pi

-

-# Import hello_misc script for handling trajectory goals with an action client

-import hello_helpers.hello_misc as hm

-

-# We're going to subscribe to a JointState message type, so we need to import

-# the definition for it

-from sensor_msgs.msg import JointState

-

-# Import the FollowJointTrajectory from the control_msgs.action package to

-# control the Stretch robot

-from control_msgs.action import FollowJointTrajectory

-

-# Import JointTrajectoryPoint from the trajectory_msgs package to define

-# robot trajectories

-from trajectory_msgs.msg import JointTrajectoryPoint

-

-# Import TransformStamped from the geometry_msgs package for the publisher

-from geometry_msgs.msg import TransformStamped

-

-class LocateArUcoTag(hm.HelloNode):

- """

- A class that actuates the RealSense camera to find the docking station's

- ArUco tag and returns a Transform between the `base_link` and the requested tag.

- """

- def __init__(self):

- """

- A function that initializes the subscriber and other needed variables.

- :param self: The self reference.

- """

- # Initialize the inhereted hm.Hellonode class

- hm.HelloNode.__init__(self)

- hm.HelloNode.main(self, 'aruco_tag_locator', 'aruco_tag_locator', wait_for_first_pointcloud=False)

- # Initialize subscriber

- self.joint_states_sub = self.create_subscription(JointState, '/stretch/joint_states', self.joint_states_callback, 1)

- # Initialize publisher

- self.transform_pub = self.create_publisher(TransformStamped, 'ArUco_transform', 10)

-

- # Initialize the variable that will store the joint state positions

- self.joint_state = None

-

- # Provide the min and max joint positions for the head pan. These values

- # are needed for sweeping the head to search for the ArUco tag

- self.min_pan_position = -3.8

- self.max_pan_position = 1.50

-

- # Define the number of steps for the sweep, then create the step size for

- # the head pan joint

- self.pan_num_steps = 10

- self.pan_step_size = abs(self.min_pan_position - self.max_pan_position)/self.pan_num_steps

-

- # Define the min tilt position, number of steps, and step size

- self.min_tilt_position = -0.75

- self.tilt_num_steps = 3

- self.tilt_step_size = pi/16

-

- # Define the head actuation rotational velocity

- self.rot_vel = 0.5 # radians per sec

-

- def joint_states_callback(self, msg):

- """

- A callback function that stores Stretch's joint states.

- :param self: The self reference.

- :param msg: The JointState message type.

- """

- self.joint_state = msg

-

- def send_command(self, command):

- '''

- Handles single joint control commands by constructing a FollowJointTrajectoryGoal

- message and sending it to the trajectory_client created in hello_misc.

- :param self: The self reference.

- :param command: A dictionary message type.

- '''

- if (self.joint_state is not None) and (command is not None):

-

- # Extract the string value from the `joint` key

- joint_name = command['joint']

-

- # Set trajectory_goal as a FollowJointTrajectory.Goal and define

- # the joint name

- trajectory_goal = FollowJointTrajectory.Goal()

- trajectory_goal.trajectory.joint_names = [joint_name]

-

- # Create a JointTrajectoryPoint message type

- point = JointTrajectoryPoint()

-

- # Check to see if `delta` is a key in the command dictionary

- if 'delta' in command:

- # Get the current position of the joint and add the delta as a

- # new position value

- joint_index = self.joint_state.name.index(joint_name)

- joint_value = self.joint_state.position[joint_index]

- delta = command['delta']

- new_value = joint_value + delta

- point.positions = [new_value]

-

- # Check to see if `position` is a key in the command dictionary

- elif 'position' in command:

- # extract the head position value from the `position` key

- point.positions = [command['position']]

-

- # Set the rotational velocity

- point.velocities = [self.rot_vel]

-

- # Assign goal position with updated point variable

- trajectory_goal.trajectory.points = [point]

-

- # Specify the coordinate frame that we want (base_link) and set the time to be now.

- trajectory_goal.trajectory.header.stamp = self.get_clock().now().to_msg()

- trajectory_goal.trajectory.header.frame_id = 'base_link'

-

- # Make the action call and send the goal. The last line of code waits

- # for the result

- self.trajectory_client.send_goal(trajectory_goal)

-

- def find_tag(self, tag_name='docking_station'):

- """

- A function that actuates the camera to search for a defined ArUco tag

- marker. Then the function returns the pose.

- :param self: The self reference.

- :param tag_name: A string value of the ArUco marker name.

-

- :returns transform: The docking station's TransformStamped message.

- """

- # Create dictionaries to get the head in its initial position

- pan_command = {'joint': 'joint_head_pan', 'position': self.min_pan_position}

- self.send_command(pan_command)

- tilt_command = {'joint': 'joint_head_tilt', 'position': self.min_tilt_position}

- self.send_command(tilt_command)

-

- # Nested for loop to sweep the joint_head_pan and joint_head_tilt in increments

- for i in range(self.tilt_num_steps):

- for j in range(self.pan_num_steps):

- # Update the joint_head_pan position by the pan_step_size

- pan_command = {'joint': 'joint_head_pan', 'delta': self.pan_step_size}

- self.send_command(pan_command)

-

- # Give time for system to do a Transform lookup before next step

- time.sleep(0.2)

-

- # Use a try-except block

- try:

- now = Time()

- # Look up transform between the base_link and requested ArUco tag

- transform = self.tf_buffer.lookup_transform('base_link',

- tag_name,

- now)

- self.get_logger().info(f"Found Requested Tag: \n{transform}")

-

- # Publish the transform

- self.transform_pub.publish(transform)

-

- # Return the transform

- return transform

- except TransformException as ex:

- continue

-

- # Begin sweep with new tilt angle

- pan_command = {'joint': 'joint_head_pan', 'position': self.min_pan_position}

- self.send_command(pan_command)

- tilt_command = {'joint': 'joint_head_tilt', 'delta': self.tilt_step_size}

- self.send_command(tilt_command)

- time.sleep(0.25)

-

- # Notify that the requested tag was not found

- self.get_logger().info("The requested tag '%s' was not found", tag_name)

-

- def main(self):

- """

- Function that initiates the issue_command function.

- :param self: The self reference.

- """

- # Create a StaticTranformBoradcaster Node. Also, start a Tf buffer that

- # will store the tf information for a few seconds.Then set up a tf listener, which

- # will subscribe to all of the relevant tf topics, and keep track of the information

- self.static_broadcaster = tf2_ros.StaticTransformBroadcaster(self)

- self.tf_buffer = tf2_ros.Buffer()

- self.listener = tf2_ros.TransformListener(self.tf_buffer, self)

-

- # Give the listener some time to accumulate transforms

- time.sleep(1.0)

-

- # Notify Stretch is searching for the ArUco tag with `get_logger().info()`

- self.get_logger().info('Searching for docking ArUco tag')

-

- # Search for the ArUco marker for the docking station

- pose = self.find_tag("docking_station")

-

-def main():

- try:

- # Instantiate the `LocateArUcoTag()` object

- node = LocateArUcoTag()

- # Run the `main()` method

- node.main()

- node.new_thread.join()

- except:

- node.get_logger().info('Interrupt received, so shutting down')

- node.destroy_node()

- rclpy.shutdown()

-

-

-if __name__ == '__main__':

- main()

-```

-

-### The Code Explained

-Now let's break the code down.

-

-```python

-#!/usr/bin/env python3

-```

-

-Every Python ROS [Node](http://docs.ros.org/en/humble/Tutorials/Beginner-CLI-Tools/Understanding-ROS2-Nodes/Understanding-ROS2-Nodes.html) will have this declaration at the top. The first line makes sure your script is executed as a Python3 script.

-

-```python

-import rclpy

-import time

-import tf2_ros

-from tf2_ros import TransformException

-from rclpy.time import Time

-from math import pi

-

-import hello_helpers.hello_misc as hm

-from sensor_msgs.msg import JointState

-from control_msgs.action import FollowJointTrajectory

-from trajectory_msgs.msg import JointTrajectoryPoint

-from geometry_msgs.msg import TransformStamped

-```

-

-You need to import `rclpy` if you are writing a ROS [Node](http://docs.ros.org/en/humble/Tutorials/Beginner-CLI-Tools/Understanding-ROS2-Nodes/Understanding-ROS2-Nodes.html). Import other python modules needed for this node. Import the `FollowJointTrajectory` from the [control_msgs.action](http://wiki.ros.org/control_msgs) package to control the Stretch robot. Import `JointTrajectoryPoint` from the [trajectory_msgs](https://github.com/ros2/common_interfaces/tree/humble/trajectory_msgs) package to define robot trajectories. The [hello_helpers](https://github.com/hello-robot/stretch_ros2/tree/humble/hello_helpers) package consists of a module that provides various Python scripts used across [stretch_ros](https://github.com/hello-robot/stretch_ros2). In this instance, we are importing the `hello_misc` script.

-

-```python

-def __init__(self):

- # Initialize the inhereted hm.Hellonode class

- hm.HelloNode.__init__(self)

- hm.HelloNode.main(self, 'aruco_tag_locator', 'aruco_tag_locator', wait_for_first_pointcloud=False)

- # Initialize subscriber

- self.joint_states_sub = self.create_subscription(JointState, '/stretch/joint_states', self.joint_states_callback, 1)

- # Initialize publisher

- self.transform_pub = self.create_publisher(TransformStamped, 'ArUco_transform', 10)

-

- # Initialize the variable that will store the joint state positions

- self.joint_state = None

-```

-

-The `LocateArUcoTag` class inherits the `HelloNode` class from `hm` and is instantiated.

-

-Set up a subscriber with `self.create_subscription(JointState, '/stretch/joint_states', self.joint_states_callback, 1)`. We're going to subscribe to the topic `stretch/joint_states`, looking for `JointState` messages. When a message comes in, ROS is going to pass it to the function `joint_states_callback()` automatically.

-

-`self.create_publisher(TransformStamped, 'ArUco_transform', 10)` declares that your node is publishing to the `ArUco_transform` topic using the message type `TransformStamped`. The `10` argument limits the amount of queued messages if any subscriber is not receiving them fast enough.

-

-```python

-self.min_pan_position = -4.10

-self.max_pan_position = 1.50

-self.pan_num_steps = 10

-self.pan_step_size = abs(self.min_pan_position - self.max_pan_position)/self.pan_num_steps

-```

-

-Provide the minimum and maximum joint positions for the head pan. These values are needed for sweeping the head to search for the ArUco tag. We also define the number of steps for the sweep, then create the step size for the head pan joint.

-

-```python

-self.min_tilt_position = -0.75

-self.tilt_num_steps = 3

-self.tilt_step_size = pi/16

-```

-

-Set the minimum position of the tilt joint, the number of steps, and the size of each step.

-

-```python

-self.rot_vel = 0.5 # radians per sec

-```

-

-Define the head actuation rotational velocity.

-

-```python

-def joint_states_callback(self, msg):

- self.joint_state = msg

-```

-

-The `joint_states_callback()` function stores Stretch's joint states.

-

-```python

-def send_command(self, command):

- if (self.joint_state is not None) and (command is not None):

- joint_name = command['joint']

- trajectory_goal = FollowJointTrajectory.Goal()

- trajectory_goal.trajectory.joint_names = [joint_name]

- point = JointTrajectoryPoint()

-```

-

-Assign `trajectory_goal` as a `FollowJointTrajectory.Goal` message type. Then extract the string value from the `joint` key. Also, assign `point` as a `JointTrajectoryPoint` message type.

-

-```python

-if 'delta' in command:

- joint_index = self.joint_state.name.index(joint_name)

- joint_value = self.joint_state.position[joint_index]

- delta = command['delta']

- new_value = joint_value + delta

- point.positions = [new_value]

-```

-

-Check to see if `delta` is a key in the command dictionary. Then get the current position of the joint and add the delta as a new position value.

-

-```python

-elif 'position' in command:

- point.positions = [command['position']]

-```

-

-Check to see if `position` is a key in the command dictionary. Then extract the position value.

-

-```python

-point.velocities = [self.rot_vel]

-trajectory_goal.trajectory.points = [point]

-trajectory_goal.trajectory.header.stamp = self.get_clock().now().to_msg()

-trajectory_goal.trajectory.header.frame_id = 'base_link'

-self.trajectory_client.send_goal(trajectory_goal)

-```

-

-Then `trajectory_goal.trajectory.points` is defined by the positions set in `point`. Specify the coordinate frame that we want (*base_link*) and set the time to be now. Make the action call and send the goal.

-

-```python

-def find_tag(self, tag_name='docking_station'):

- pan_command = {'joint': 'joint_head_pan', 'position': self.min_pan_position}

- self.send_command(pan_command)

- tilt_command = {'joint': 'joint_head_tilt', 'position': self.min_tilt_position}

- self.send_command(tilt_command)

-```

-

-Create a dictionary to get the head in its initial position for its search and send the commands with the `send_command()` function.

-

-```python

-for i in range(self.tilt_num_steps):

- for j in range(self.pan_num_steps):

- pan_command = {'joint': 'joint_head_pan', 'delta': self.pan_step_size}

- self.send_command(pan_command)

- time.sleep(0.5)

-```

-

-Utilize a nested for loop to sweep the pan and tilt in increments. Then update the `joint_head_pan` position by the `pan_step_size`. Use `time.sleep()` function to give time to the system to do a Transform lookup before the next step.

-

-```python

-try:

- now = Time()

- transform = self.tf_buffer.lookup_transform('base_link',

- tag_name,

- now)

- self.get_logger().info(f"Found Requested Tag: \n{transform}")

- self.transform_pub.publish(transform)

- return transform

-except TransformException as ex:

- continue

-```

-

-Use a try-except block to look up the transform between the *base_link* and the requested ArUco tag. Then publish and return the `TransformStamped` message.

-

-```python

-pan_command = {'joint': 'joint_head_pan', 'position': self.min_pan_position}

-self.send_command(pan_command)

-tilt_command = {'joint': 'joint_head_tilt', 'delta': self.tilt_step_size}

-self.send_command(tilt_command)

-time.sleep(.25)

-```

-

-Begin sweep with new tilt angle.

-

-```python

-def main(self):

- self.static_broadcaster = tf2_ros.StaticTransformBroadcaster(self)

- self.tf_buffer = tf2_ros.Buffer()

- self.listener = tf2_ros.TransformListener(self.tf_buffer, self)

- time.sleep(1.0)

-```

-

-Create a StaticTranformBoradcaster Node. Also, start a tf buffer that will store the tf information for a few seconds. Then set up a tf listener, which will subscribe to all of the relevant tf topics, and keep track of the information. Include `time.sleep(1.0)` to give the listener some time to accumulate transforms.

-

-```python

-self.get_logger().info('Searching for docking ArUco tag')

-pose = self.find_tag("docking_station")

-```

-

-Notice Stretch is searching for the ArUco tag with a `self.get_logger().info()` function. Then search for the ArUco marker for the docking station.

-

-```python

-def main():

- try:

- node = LocateArUcoTag()

- node.main()

- node.new_thread.join()

- except:

- node.get_logger().info('Interrupt received, so shutting down')

- node.destroy_node()

- rclpy.shutdown()

-```

-Instantiate the `LocateArUcoTag()` object and run the `main()` method.

diff --git a/ros2/funmap_tutorial.md b/ros2/funmap_tutorial.md

new file mode 100644

index 0000000..c0a3c59

--- /dev/null

+++ b/ros2/funmap_tutorial.md

@@ -0,0 +1,6 @@

+# FUNMAP Tutorial

+

+{%

+ include-markdown "https://raw.githubusercontent.com/hello-robot/stretch_ros2/refs/heads/humble/stretch_funmap/README.md"

+ rewrite-relative-urls=false

+%}

\ No newline at end of file

diff --git a/ros2/gazebo_basics.md b/ros2/gazebo_basics.md

deleted file mode 100644

index 4e60eac..0000000

--- a/ros2/gazebo_basics.md

+++ /dev/null

@@ -1,37 +0,0 @@

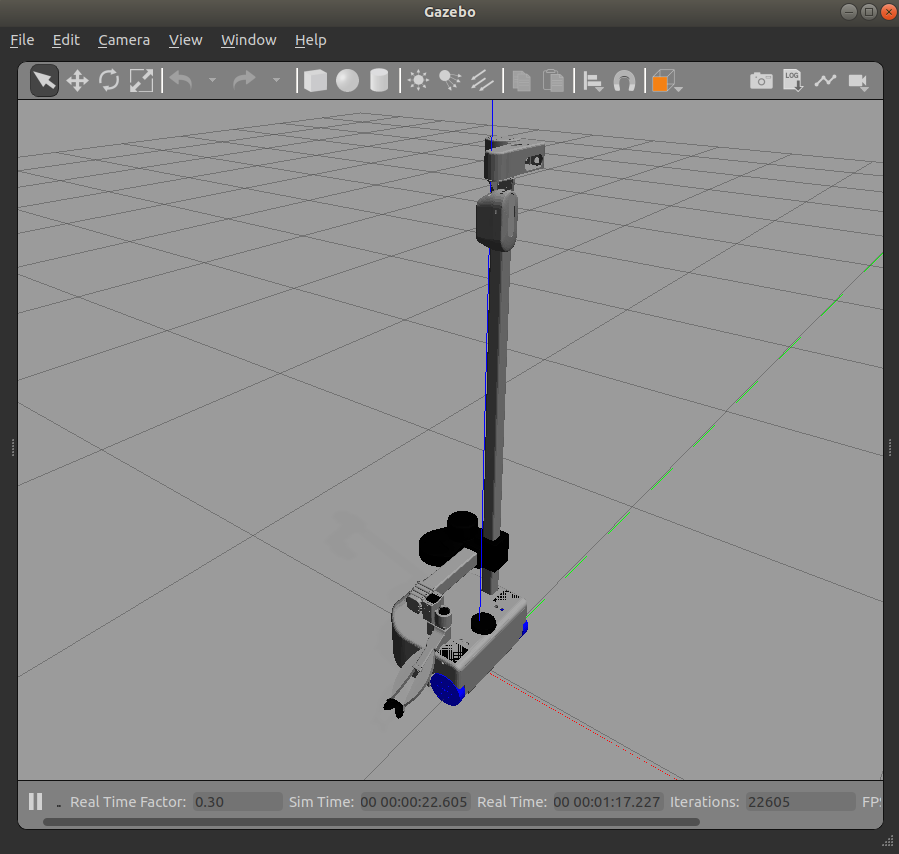

-# Spawning Stretch in Simulation (Gazebo)

-

-!!! note

- ROS 2 tutorials are still under active development.

-

-!!! note

- Simulation support for Stretch in ROS 2 is under active development. Please reach out to us if you want to work with Stretch in a simulated environment like Gazebo/Ignition in ROS 2.

-

-Refer to the instructions below if you want to test this functionality in ROS 1.

-

-### Empty World Simulation

-To spawn the Stretch in gazebo's default empty world run the following command in your terminal.

-

-```{.bash .shell-prompt}

-roslaunch stretch_gazebo gazebo.launch

-```

-

-This will bringup the robot in the gazebo simulation similar to the image shown below.

-

-

-

-

-### Custom World Simulation

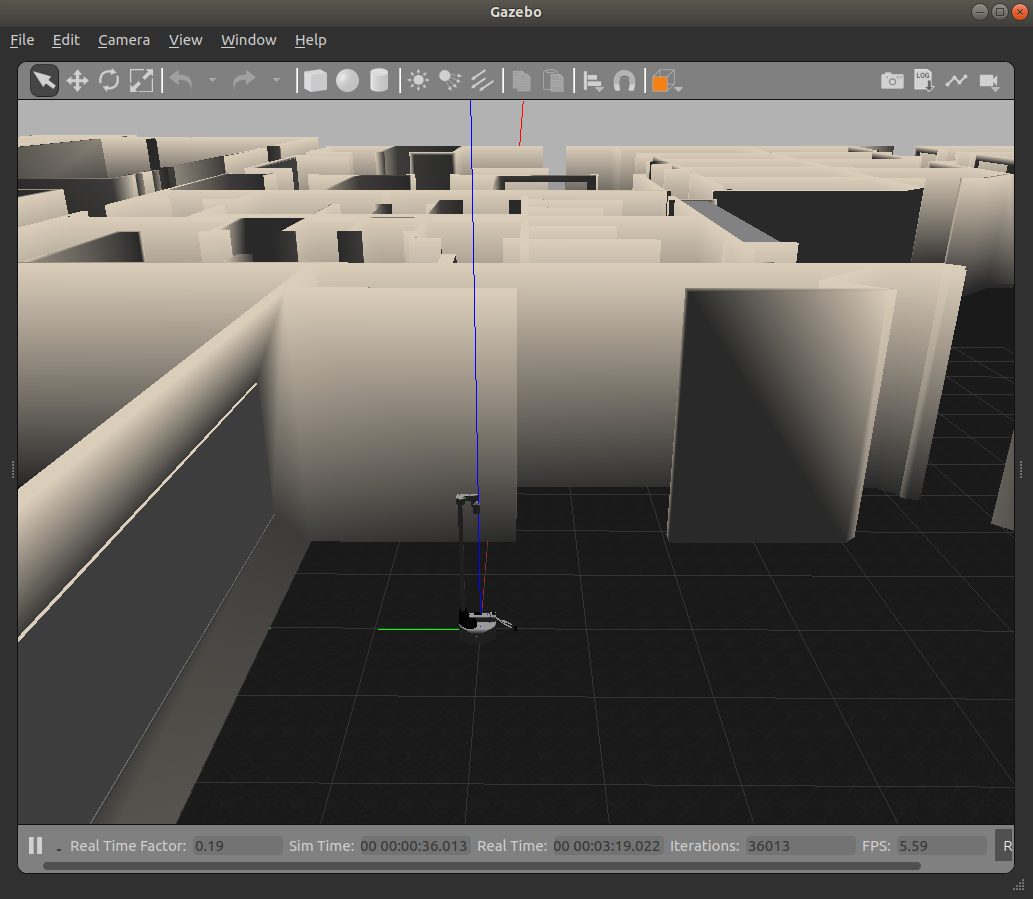

-In gazebo, you are able to spawn Stretch in various worlds. First, source the gazebo world files by running the following command in a terminal

-

-```{.bash .shell-prompt}

-echo "source /usr/share/gazebo/setup.sh"

-```

-

-Then using the world argument, you can spawn the stretch in the willowgarage world by running the following

-

-```{.bash .shell-prompt}

-roslaunch stretch_gazebo gazebo.launch world:=worlds/willowgarage.world

-```

-

-

-

diff --git a/ros2/getting_started.md b/ros2/getting_started.md

index 1ce4f93..b2aa57d 100644

--- a/ros2/getting_started.md

+++ b/ros2/getting_started.md

@@ -1,27 +1,3 @@

-# Getting Started

-

-This tutorial series covers writing ROS 2 software for Stretch. ROS 2 programs can be written in a variety of programming languages, but this series uses Python. We'll write programs that enable Stretch to navigate autonomously in its environment, manipulate objects with Stretch's gripper, perceive its environment, and much more.

-

-## Prerequisites

-

-Ensure that:

-

- 1. Your Stretch has the latest robot distribution installed

- - These tutorials were written for the latest robot distribution. Take a look at the [Distributions & Roadmap](../../software/distributions/) guide to identify your current distribution and upgrade if necessary.

- 2. You are comfortable developing with Stretch

- - If you've never developed with Stretch before or are new to programming, check out the [Developing with Stretch](../../developing/basics/) tutorial series. In particular, the [Using ROS 2 with Stretch](#TODO) tutorial from that series is a good resource for those new to ROS 2.

-

-

+{%

+ include-markdown "./README.md"

+%}

\ No newline at end of file

diff --git a/ros2/intro_to_ros2.md b/ros2/intro_to_ros2.md

index 37fb250..470a39e 100644

--- a/ros2/intro_to_ros2.md

+++ b/ros2/intro_to_ros2.md

@@ -1,4 +1,5 @@

-# Introduction to ROS 2

+# Introduction to ROS 2 and the python client library (rclpy)

+

In this tutorial we will explore rclpy, the client library for interacting with ROS 2 using the Python API. The rclpy library forms the base of ROS 2 and you will notice that all tutorials in the following sections will use it. In this section we will focus on a few common constructs of rclpy and then follow some examples using the IPython interpreter to get familiar with them.

## IPython

@@ -163,3 +164,97 @@ print("Received response: {}".format(req_future.result().sum))

### rclpy.spin_until_future_complete()

Notice that the spin method manifests itself as the spin_until_future_complete() method which takes the node, future and timeout_sec as the arguments. The future is an object in ROS 2 that’s returned immediately after an async service call has been made. We can then wait on the result of this future. This way the call to the service is not blocking and the code execution can continue as soon as the service call is issued.

+

+

+## Understanding ROS_DOMAIN_ID

+

+### What is ROS_DOMAIN_ID?

+

+ROS_DOMAIN_ID is an environment variable that determines which DDS (Data Distribution Service) domain your ROS2 nodes will communicate on. Think of it as a "channel" or "network ID" - only nodes with the same ROS_DOMAIN_ID can discover and communicate with each other.

+

+This is particularly useful in environments where:

+- Multiple robots or ROS2 systems are running on the same network

+- Multiple users are developing ROS2 applications simultaneously

+- You want to isolate your ROS2 communication from other systems

+

+The ROS_DOMAIN_ID can be any integer from 0 to 101 (some values may be reserved depending on your DDS implementation). The default value is 0.

+

+### Why is ROS_DOMAIN_ID Important?

+

+When working with Stretch robots, setting the correct ROS_DOMAIN_ID is crucial because:

+

+1. **Multi-robot environments**: If you have multiple Stretch robots on the same network, each should use a different ROS_DOMAIN_ID to prevent cross-talk

+2. **Shared networks**: In lab or classroom settings, different users' ROS2 nodes won't interfere with each other if they use different domain IDs

+3. **Debugging**: You can isolate your development environment from production systems

+

+### Setting ROS_DOMAIN_ID in the Terminal

+

+To set the ROS_DOMAIN_ID for the current terminal session only, use the `export` command:

+

+```{.bash .shell-prompt}

+export ROS_DOMAIN_ID=42

+```

+

+You can verify it's set correctly:

+

+```{.bash .shell-prompt}

+echo $ROS_DOMAIN_ID

+```

+

+This setting will only persist for the current terminal session. Once you close the terminal, the setting is lost.

+

+!!! warning

+ All terminals where you run ROS2 nodes that need to communicate with each other must have the same ROS_DOMAIN_ID set. If you launch the Stretch driver in one terminal with `ROS_DOMAIN_ID=42` and try to run your node in another terminal with `ROS_DOMAIN_ID=0`, they won't be able to see each other!

+

+### Setting ROS_DOMAIN_ID Permanently in ~/.bashrc

+

+To avoid setting the ROS_DOMAIN_ID every time you open a new terminal, you can add it to your `~/.bashrc` file. This file is executed automatically every time you open a new terminal.

+

+Add the following line to your `~/.bashrc`:

+

+```{.bash .shell-prompt}

+echo "export ROS_DOMAIN_ID=42" >> ~/.bashrc

+```

+

+Or manually edit the file:

+

+```{.bash .shell-prompt}

+nano ~/.bashrc

+```

+

+Add this line at the end of the file (replace 42 with your desired domain ID):

+

+```bash

+export ROS_DOMAIN_ID=42

+```

+

+Save and exit (in nano: Ctrl+X, then Y, then Enter).

+

+To apply the changes to your current terminal without closing it:

+

+```{.bash .shell-prompt}

+source ~/.bashrc

+```

+

+!!! tip

+ When working with a Stretch robot, it's a good practice to set the same ROS_DOMAIN_ID in the `~/.bashrc` file on both your development machine and the robot itself. This ensures consistent communication across all terminals and reboots.

+

+### Checking Your Current ROS_DOMAIN_ID

+

+You can always check what ROS_DOMAIN_ID is currently set:

+

+```{.bash .shell-prompt}

+echo $ROS_DOMAIN_ID

+```

+

+If this returns an empty line, it means ROS_DOMAIN_ID is not set, and ROS2 will use the default value of 0.

+

+### Example: Using ROS_DOMAIN_ID with Multiple Robots

+

+If you're working in a lab with multiple Stretch robots, you might organize them like this:

+

+- **Stretch Robot 1**: `ROS_DOMAIN_ID=10`

+- **Stretch Robot 2**: `ROS_DOMAIN_ID=11`

+- **Stretch Robot 3**: `ROS_DOMAIN_ID=12`

+

+Each robot and its corresponding development machine would have the same domain ID set in their `~/.bashrc` files.

diff --git a/ros2/example_6.md b/ros2/joint_effort_plotting.md

similarity index 100%

rename from ros2/example_6.md

rename to ros2/joint_effort_plotting.md

diff --git a/ros2/example_5.md b/ros2/joint_states.md

similarity index 99%

rename from ros2/example_5.md

rename to ros2/joint_states.md

index 43aed76..cfeb6a9 100644

--- a/ros2/example_5.md

+++ b/ros2/joint_states.md

@@ -1,4 +1,5 @@

## Example 5

+

In this example, we will review a Python script that prints out the positions of a selected group of Stretch joints. This script is helpful if you need the joint positions after you teleoperated Stretch with the Xbox controller or physically moved the robot to the desired configuration after hitting the run stop button.

If you are looking for a continuous print of the joint states while Stretch is in action, then you can use the [ros2 topic command-line tool](https://docs.ros.org/en/humble/Tutorials/Beginner-CLI-Tools/Understanding-ROS2-Topics/Understanding-ROS2-Topics.html) shown in the [Internal State of Stretch Tutorial](https://github.com/hello-robot/stretch_tutorials/blob/master/ros2/internal_state_of_stretch.md).

diff --git a/ros2/example_2.md b/ros2/lidar_filtering.md

similarity index 99%

rename from ros2/example_2.md

rename to ros2/lidar_filtering.md

index 675c83c..da251de 100644

--- a/ros2/example_2.md

+++ b/ros2/lidar_filtering.md

@@ -1,5 +1,6 @@

## Example 2

+

!!! note

ROS 2 tutorials are still under active development.

diff --git a/ros2/perception.md b/ros2/perception.md

deleted file mode 100644

index 6a5c789..0000000

--- a/ros2/perception.md

+++ /dev/null

@@ -1,48 +0,0 @@

-## Perception Introduction

-

-The Stretch robot is equipped with the [Intel RealSense D435i camera](https://www.intelrealsense.com/depth-camera-d435i/), an essential component that allows the robot to measure and analyze the world around it. In this tutorial, we are going to showcase how to visualize the various topics published by the camera.

-

-Begin by running the stretch `driver.launch.py` file.

-

-```{.bash .shell-prompt}

-ros2 launch stretch_core stretch_driver.launch.py

-```

-

-To activate the [RealSense camera](https://www.intelrealsense.com/depth-camera-d435i/) and publish topics to be visualized, run the following launch file in a new terminal.

-

-```{.bash .shell-prompt}

-ros2 launch stretch_core d435i_low_resolution.launch.py

-```

-

-Within this tutorial package, there is an [RViz config file](https://github.com/hello-robot/stretch_tutorials/blob/noetic/rviz/perception_example.rviz) with the topics for perception already in the Display tree. You can visualize these topics and the robot model by running the command below in a new terminal.

-

-```{.bash .shell-prompt}

-ros2 run rviz2 rviz2 -d /home/hello-robot/ament_ws/src/stretch_tutorials/rviz/perception_example.rviz

-```

-

-### PointCloud2 Display

-

-A list of displays on the left side of the interface can visualize the camera data. Each display has its properties and status that notify a user if topic messages are received.

-

-For the `PointCloud2` display, a [sensor_msgs/pointCloud2](http://docs.ros.org/en/lunar/api/sensor_msgs/html/msg/PointCloud2.html) message named `/camera/depth/color/points` is received and the GIF below demonstrates the various display properties when visualizing the data.

-

-

-  -

-

-

-### Image Display

-The `Image` display when toggled creates a new rendering window that visualizes a [sensor_msgs/Image](http://docs.ros.org/en/lunar/api/sensor_msgs/html/msg/Image.html) messaged, */camera/color/image_raw*. This feature shows the image data from the camera; however, the image comes out sideways.

-

-

-  -

-

-

-### DepthCloud Display

-The `DepthCloud` display is visualized in the main RViz window. This display takes in the depth image and RGB image provided by RealSense to visualize and register a point cloud.

-

-

-  -

-

-

-## Deep Perception

-Hello Robot also has a ROS package that uses deep learning models for various detection demos. A link to the tutorials is provided: [stretch_deep_perception](https://docs.hello-robot.com/latest/ros2/deep_perception/).

diff --git a/ros2/example_7.md b/ros2/realsense_camera.md

similarity index 100%

rename from ros2/example_7.md

rename to ros2/realsense_camera.md

diff --git a/ros2/robot_drivers.md b/ros2/robot_drivers.md

index 90df82d..8e1c6c4 100644

--- a/ros2/robot_drivers.md

+++ b/ros2/robot_drivers.md

@@ -94,25 +94,3 @@ ros2 service call /stretch/stow_the_robot

ros2 service call /stretch/stop_the_robot

# TODO - verify commands and include outputs

```

-

-## Lidar Driver

-

-Rplidar Spinning Lidar

-

-## Camera Drivers

-

-Realsense Cameras

-

-### Head Camera

-

-### Eye in Hand Camera

-

-### Fisheye Navigation Camera

-

-## Mic Array Driver

-

-Respeaker Microphone Array

-

-## IMUs

-

-## Cliff Sensors

diff --git a/ros2/rviz_basics.md b/ros2/rviz_basics.md

index 8ee1260..a112f4a 100644

--- a/ros2/rviz_basics.md

+++ b/ros2/rviz_basics.md

@@ -1,8 +1,5 @@

## Visualizing with RViz

-!!! note

- ROS 2 tutorials are still under active development.

-

You can utilize RViz to visualize Stretch's sensor information. To begin, run the stretch driver launch file.

```{.bash .shell-prompt}

@@ -24,3 +21,52 @@ If you want to visualize Stretch's [tf transform tree](http://wiki.ros.org/rviz/

There are further tutorials for RViz that can be found [here](http://wiki.ros.org/rviz/Tutorials).

+

+## Visualizing camera data

+

+The Stretch robot is equipped with the [Intel RealSense D435i camera](https://www.intelrealsense.com/depth-camera-d435i/), an essential component that allows the robot to measure and analyze the world around it. In this tutorial, we are going to showcase how to visualize the various topics published by the camera.

+

+Begin by running the stretch `driver.launch.py` file.

+

+```{.bash .shell-prompt}

+ros2 launch stretch_core stretch_driver.launch.py

+```

+

+To activate the [RealSense camera](https://www.intelrealsense.com/depth-camera-d435i/) and publish topics to be visualized, run the following launch file in a new terminal.

+

+```{.bash .shell-prompt}

+ros2 launch stretch_core d435i_low_resolution.launch.py

+```

+

+Within this tutorial package, there is an [RViz config file](https://github.com/hello-robot/stretch_tutorials/blob/noetic/rviz/perception_example.rviz) with the topics for perception already in the Display tree. You can visualize these topics and the robot model by running the command below in a new terminal.

+

+```{.bash .shell-prompt}

+ros2 run rviz2 rviz2 -d /home/hello-robot/ament_ws/src/stretch_tutorials/rviz/perception_example.rviz

+```

+

+## PointCloud2 Display

+

+A list of displays on the left side of the interface can visualize the camera data. Each display has its properties and status that notify a user if topic messages are received.

+

+For the `PointCloud2` display, a [sensor_msgs/pointCloud2](http://docs.ros.org/en/lunar/api/sensor_msgs/html/msg/PointCloud2.html) message named `/camera/depth/color/points` is received and the GIF below demonstrates the various display properties when visualizing the data.

+

+

+  +

+

+

+## Image Display

+The `Image` display when toggled creates a new rendering window that visualizes a [sensor_msgs/Image](http://docs.ros.org/en/lunar/api/sensor_msgs/html/msg/Image.html) messaged, */camera/color/image_raw*. This feature shows the image data from the camera; however, the image comes out sideways.

+

+

+  +

+

+

+## DepthCloud Display

+The `DepthCloud` display is visualized in the main RViz window. This display takes in the depth image and RGB image provided by RealSense to visualize and register a point cloud.

+

+

+  +

+

+

+## Deep Perception

+Hello Robot also has a ROS package that uses deep learning models for various detection demos. A link to the tutorials is provided: [stretch_deep_perception](https://docs.hello-robot.com/latest/ros2/deep_perception/).

diff --git a/ros2/example_4.md b/ros2/rviz_markers.md

similarity index 99%

rename from ros2/example_4.md

rename to ros2/rviz_markers.md

index 9dd0c78..2bec6b9 100644

--- a/ros2/example_4.md

+++ b/ros2/rviz_markers.md

@@ -1,5 +1,6 @@

## Example 4

+

!!! note

ROS 2 tutorials are still under active development.

diff --git a/ros2/sensors_tutorial.md b/ros2/sensors_tutorial.md

new file mode 100644

index 0000000..e94ab47

--- /dev/null

+++ b/ros2/sensors_tutorial.md

@@ -0,0 +1,60 @@

+# Sensors Tutorial

+

+This tutorial covers how to work with Stretch's various sensors including the ReSpeaker microphone array, IMU, bump sensors, and cliff sensors.

+

+## ReSpeaker Microphone Array

+

+Stretch comes equipped with a [ReSpeaker Mic Array v2.0](https://wiki.seeedstudio.com/ReSpeaker_Mic_Array_v2.0/) that provides audio input capabilities.

+

+

+  +

+

+

+### Basic ReSpeaker Usage

+

+To start using the ReSpeaker microphone array, launch the ReSpeaker node:

+

+```{.bash .shell-prompt}

+ros2 launch respeaker_ros2 respeaker.launch.py

+```

+

+This will start publishing audio data and speech recognition results to various topics.

+

+### Available Topics

+

+The ReSpeaker publishes to several topics:

+

+- `/audio` - Raw audio data

+- `/speech_to_text` - Recognized speech text

+- `/sound_direction` - Direction of detected sound

+- `/is_speeching` - Boolean indicating if speech is detected

+

+For detailed information about ReSpeaker topics, see the [ReSpeaker Topics](respeaker_topics.md) tutorial.

+

+## IMU Sensor

+

+Stretch includes an IMU (Inertial Measurement Unit) that provides orientation and motion data.

+

+### Accessing IMU Data

+

+The IMU data is published to the `/stretch/imu` topic as `sensor_msgs/Imu` messages.

+

+```{.bash .shell-prompt}

+ros2 topic echo /stretch/imu

+```

+

+## Bump Sensors

+

+Stretch has bump sensors that detect physical contact with obstacles.

+

+## Cliff Sensors

+

+Cliff sensors help prevent Stretch from falling off edges or down stairs.

+

+

+## Sensors Examples

+

+- Explore the [Speech to Text](speech_to_text.md) tutorial for advanced audio processing

+- Learn about [Voice Teleop](voice_teleop.md) for voice-controlled robot operation

+- Check out the [ReSpeaker Mic Array](respeaker_mic_array.md) tutorial for detailed microphone setup

+

diff --git a/ros2/example_8.md b/ros2/speech_to_text.md

similarity index 99%

rename from ros2/example_8.md

rename to ros2/speech_to_text.md

index 7ab62b3..3a7b80d 100644

--- a/ros2/example_8.md

+++ b/ros2/speech_to_text.md

@@ -1,4 +1,4 @@

-# Example 8

+## Example 8

This example will showcase how to save the interpreted speech from Stretch's [ReSpeaker Mic Array v2.0](https://wiki.seeedstudio.com/ReSpeaker_Mic_Array_v2.0/) to a text file.

diff --git a/ros2/stretch_simulation.md b/ros2/stretch_simulation.md

new file mode 100644

index 0000000..c097bd8

--- /dev/null

+++ b/ros2/stretch_simulation.md

@@ -0,0 +1,562 @@

+# Stretch Simulation Tutorial

+

+This tutorial will guide you through setting up and using the Stretch robot simulation in ROS2.

+

+Note: You do not need to follow this tutorial if you are running on a Stretch robot.

+

+## What You'll Learn

+

+By the end of this tutorial, you'll be able to:

+

+- Install and configure the Stretch simulation environment

+

+- Launch the simulated robot in various environments

+

+- Control the robot using keyboard teleop and web interface

+

+- Use the simulation for navigation and mapping

+

+- Integrate the simulation with your own ROS2 applications

+

+## Prerequisites

+

+Before starting this tutorial, you should have:

+

+- Basic familiarity with Linux command line

+

+- Understanding of ROS2 concepts (nodes, topics, services). If you're new to ROS2 concepts, we recommend first reading through the [Introduction to ROS2](intro_to_ros2.md) tutorial.

+

+- A computer meeting the system requirements below

+

+## System Requirements

+

+**Operating System**: Ubuntu 22.04 (recommended) or WSL2 with GPU acceleration

+

+**Hardware Requirements**:

+- **Minimum**: 16GB RAM, Nvidia graphics card

+

+- **Recommended**: 32GB RAM, dedicated GPU

+

+**Why these requirements?** The simulation uses Mujoco physics engine with 3D rendering, which requires significant computational resources and GPU acceleration for smooth performance.

+

+## Installation Guide

+

+### Step 1: Install ROS2 Humble (10 minutes)

+

+> **Note**: Skip this step if you're running on a Stretch robot, as ROS2 is already installed.

+

+```bash

+# Add universe repository for additional packages

+sudo apt install software-properties-common

+sudo add-apt-repository universe

+

+# Install curl for downloading ROS2 keys

+sudo apt update && sudo apt install curl -y

+

+# Add ROS2 official repository